
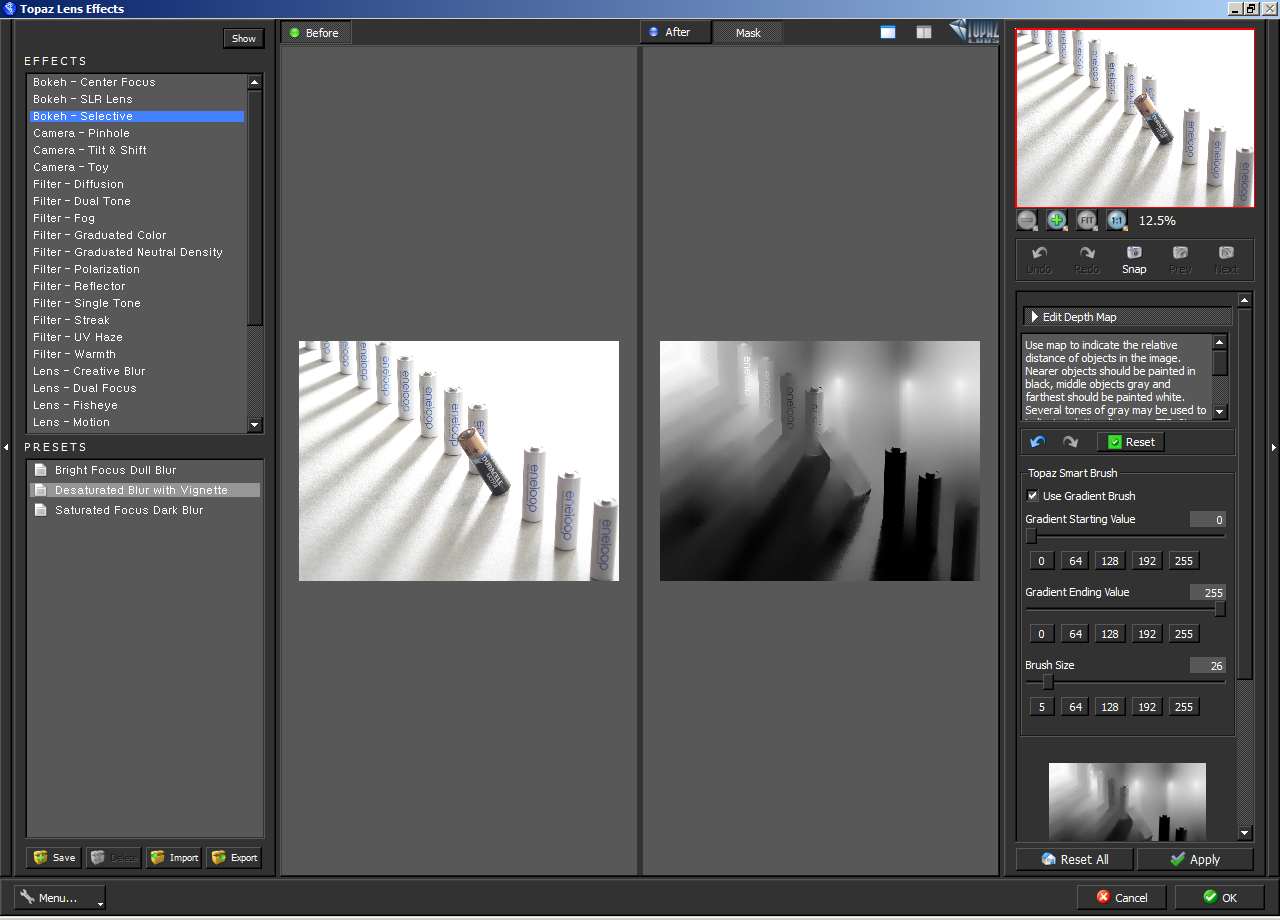

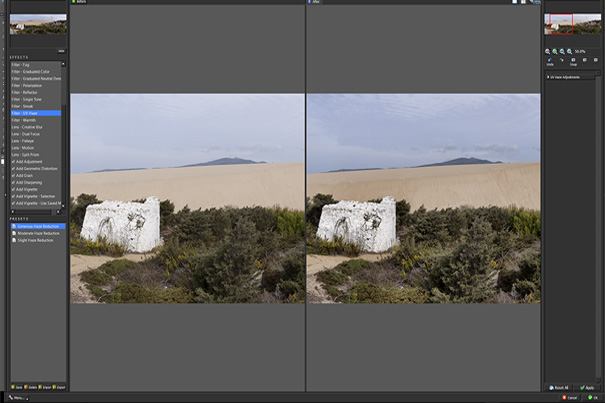

Light field cameras capture a scene’s multi-directional light field in a single image, which allows depth to be estimated. In this paper, we introduce a fully automatic, fast method for depth estimation from a single plenoptic image that uses a RANSAC-like algorithm for feature matching. The novelty of our approach is a global method that back-projects correspondences found using photometric similarity to obtain a 3D virtual point cloud. We then use lenses with different focal lengths in a multiple depth map refinement phase and reproject the results to the image plane, generating an accurate depth map per micro lens. Tests on simulated and real images show a good trade-off between computation time and accuracy. Our method achieves accuracy similar to the state of the art in considerably less time (speedups of around 3 times).
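The abstract does not spell out the RANSAC-like matching step. As a generic illustration only, and not the authors' algorithm, the sketch below shows the usual hypothesize-and-verify loop on a simplified problem: estimating one horizontal disparity from putative correspondences between two neighbouring micro-images when some matches are wrong. The function name `ransac_disparity`, the tolerance, the iteration count and the sample data are all assumptions made for this example.

```python
import random


def ransac_disparity(matches, tol=0.5, iters=200, seed=0):
    """Robustly estimate a single 1-D disparity from noisy matches.

    matches: list of (x_a, x_b) pixel coordinates of putative
             correspondences in two neighbouring micro-images
             (hypothetical input format).
    Returns (disparity, inlier_indices).
    """
    if not matches:
        raise ValueError("need at least one match")
    rng = random.Random(seed)
    best_inliers = []
    for _ in range(iters):
        # Minimal sample: one match fully determines a constant shift.
        x_a, x_b = rng.choice(matches)
        d = x_a - x_b
        # Consensus set: matches whose shift agrees with the hypothesis.
        inliers = [i for i, (a, b) in enumerate(matches)
                   if abs((a - b) - d) <= tol]
        if len(inliers) > len(best_inliers):
            best_inliers = inliers
    # Refit on the consensus set; for a constant model the
    # least-squares estimate is the mean of the inlier shifts.
    d_hat = sum(matches[i][0] - matches[i][1]
                for i in best_inliers) / len(best_inliers)
    return d_hat, best_inliers


# Five consistent matches (shift near 2 px) and two gross outliers.
matches = [(10.0, 7.9), (20.0, 18.1), (30.0, 28.0), (40.0, 37.8),
           (50.0, 48.2), (15.0, 3.0), (25.0, 31.0)]
d_hat, inliers = ransac_disparity(matches)
print(d_hat, inliers)
```

The single-sample hypothesis keeps the example short. A real pipeline would fit a richer model, and the back-projection of the surviving matches into a 3D virtual point cloud depends on camera calibration that the abstract does not give.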
